Which popular AI chatbots hallucinate the most?

Some AI chatbots hallucinate more often than others.

Which AI chatbot hallucinates the most? | Image by PhoneArena

AI chatbots are not perfect; they sometimes hallucinate, confidently presenting false information as fact. In layman's terms, the large language models (LLMs) that power these chatbots are trained to use patterns to predict the most likely next word in a sequence.

Why AI chatbots hallucinate

If the model cannot find a pattern that answers a question, it still tries to answer by stringing together words that are statistically plausible, even if the result is factually wrong. This is why a human still needs to verify AI-generated details such as stock prices, names, and dates. In a sense, the model isn't at fault when it hallucinates: it is doing exactly what it was built to do, even when it lacks the data needed to give a correct answer.
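The next-word idea described above can be illustrated with a deliberately tiny sketch. The Python below is a toy bigram model, not how real LLMs work internally (they use large neural networks over tokens and often sample from many candidate words rather than always taking the top one). The training text, the helper names, and the fallback rule are all hypothetical, chosen only to show how a purely statistical predictor produces fluent text with no regard for facts.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text. Real LLMs learn from vastly more data
# with neural networks, not simple word-pair counts.
CORPUS = (
    "the stock price rose today . the stock price fell today . "
    "the company reported record profits . the price rose today ."
)


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count which word follows which in the training text."""
    words = text.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word(follows: dict[str, Counter], word: str, fallback: Counter) -> str:
    """Return the most likely next word.

    If `word` never appeared in training (no pattern to follow), fall back to
    the most common word overall instead of admitting it doesn't know.
    """
    if word in follows:
        return follows[word].most_common(1)[0][0]
    return fallback.most_common(1)[0][0]


def generate(prompt: str, length: int = 5) -> str:
    """Extend `prompt` by `length` words, always picking the likeliest next word."""
    follows = train_bigrams(CORPUS)
    fallback = Counter(CORPUS.split())
    words = prompt.split()
    for _ in range(length):
        words.append(next_word(follows, words[-1], fallback))
    return " ".join(words)


print(generate("acme stock", 3))  # → acme stock price rose today
```

Asked to continue `acme stock`, the sketch confidently answers that the price "rose today", even though "acme" never appears in its training text. It simply follows the most common pattern, which is a miniature version of a hallucination.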

With 25% of American workers now using AI regularly, it is important to know which chatbots are prone to hallucinating and which are often unavailable when you need them. The findings below come from Legal Guardian Digital, an SEO company for law firms.

The chatbot that hallucinates the most is revealed in this survey

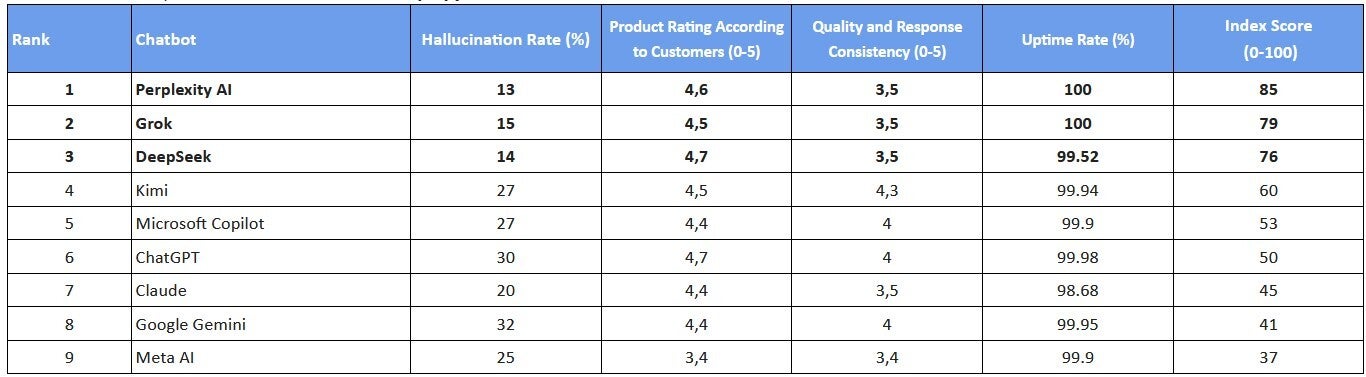

The study examined which popular AI chatbots can be trusted most by measuring how often each model gave false information, which ones had higher customer satisfaction scores, and which ones stayed available to users without crashing. Each of these variables was used to calculate an index score (0-100).


The chatbot with the highest hallucination rate in the ratings is Google Gemini. According to the report, Gemini is "tripping" on 32% of its replies, which might make some people inside Apple a little nervous. Apple is reportedly paying Google at least $1 billion annually to use a custom 1.2-trillion-parameter Gemini LLM to power the Siri chatbot expected to arrive with iOS 27 later this year.

ChatGPT had the second-highest hallucination rate, with three out of every 10 responses containing false information. The chatbot least likely to hallucinate is Perplexity AI, which delivered mistaken answers only 13% of the time. China's DeepSeek and Elon Musk-owned Grok had the next-lowest rates, at 14% and 15% respectively.

Ranking the top AI chatbots. | Image by Legal Guardian Digital

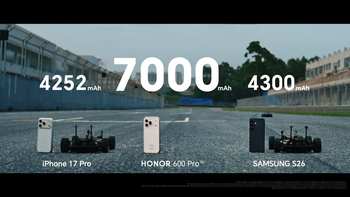

To put it more simply, with an inaccurate response 30% of the time, ChatGPT is roughly twice as likely as DeepSeek (14%) to give you an incorrect reply. Remember, DeepSeek was trained for a fraction of what was spent to train ChatGPT.
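The comparison with DeepSeek comes down to simple division of the reported rates. The short Python sketch below divides each chatbot's hallucination rate by DeepSeek's; the dictionary and the `relative_rate` helper are illustrative names, not part of the study.

```python
# Hallucination rates reported in the Legal Guardian Digital survey
# (percentage of replies containing false information).
HALLUCINATION_RATES = {
    "Gemini": 32,
    "ChatGPT": 30,
    "Grok": 15,
    "DeepSeek": 14,
    "Perplexity AI": 13,
}


def relative_rate(model: str, baseline: str) -> float:
    """Return how many times more often `model` hallucinates than `baseline`."""
    return HALLUCINATION_RATES[model] / HALLUCINATION_RATES[baseline]


for name in HALLUCINATION_RATES:
    print(f"{name}: {relative_rate(name, 'DeepSeek'):.2f}x DeepSeek's rate")
# ChatGPT's line reads "ChatGPT: 2.14x DeepSeek's rate"
```

ChatGPT comes out at about 2.14 times DeepSeek's rate (30 / 14), so "roughly twice" is a fair rounding; Gemini works out to about 2.29 times.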

Two chatbots did not go down at all during the survey

DeepSeek and ChatGPT shared the highest customer satisfaction score, 4.7 out of 5, followed by Perplexity AI at 4.6. Meta AI had the lowest score at 3.4, while the next-lowest score, 4.4, was shared by three models.

For consistency and quality of responses, rated on a scale of 0-5, Kimi AI scored highest at 4.3. ChatGPT, Copilot, and Gemini tied at 4, while Meta AI came last at 3.4.

Only two chatbots, Perplexity AI and Grok, stayed up and running the entire time. This matters because no matter how accurate an AI model is, that accuracy is useless if the service is down. ChatGPT (99.98% uptime) and Gemini (99.95% uptime) were among the chatbots that were available practically all of the time.
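To put uptime percentages like these in perspective, they can be converted into minutes of downtime. The Python sketch below assumes a 30-day window purely for illustration, since the survey's measurement period isn't stated; `downtime_minutes` is a hypothetical helper.

```python
# Assumption: a 30-day window. The survey's actual measurement period is not stated.
MINUTES_PER_30_DAYS = 30 * 24 * 60  # 43,200 minutes

# Uptime figures reported in the survey.
UPTIME_PERCENT = {
    "Perplexity AI": 100.0,
    "Grok": 100.0,
    "ChatGPT": 99.98,
    "Gemini": 99.95,
}


def downtime_minutes(uptime_percent: float, window_minutes: int = MINUTES_PER_30_DAYS) -> float:
    """Convert an uptime percentage into minutes of downtime over the window."""
    return (100.0 - uptime_percent) / 100.0 * window_minutes


for name, uptime in UPTIME_PERCENT.items():
    print(f"{name}: about {downtime_minutes(uptime):.1f} minutes down per 30 days")
```

Under that assumption, 99.98% uptime works out to roughly 8.6 minutes of downtime and 99.95% to roughly 21.6 minutes.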

Overall, these models very rarely went down: even the lowest uptime rate, which belonged to Claude, was a still very reliable 99.68%.

The top-ranked chatbot and the one with the lowest score

The Index Score, which runs from 0 to 100, combines all of the variables above. Perplexity AI ranked first with a score of 85, followed by Grok at 79 and then DeepSeek.
