Your social-media photo could soon have you starring in a porn video

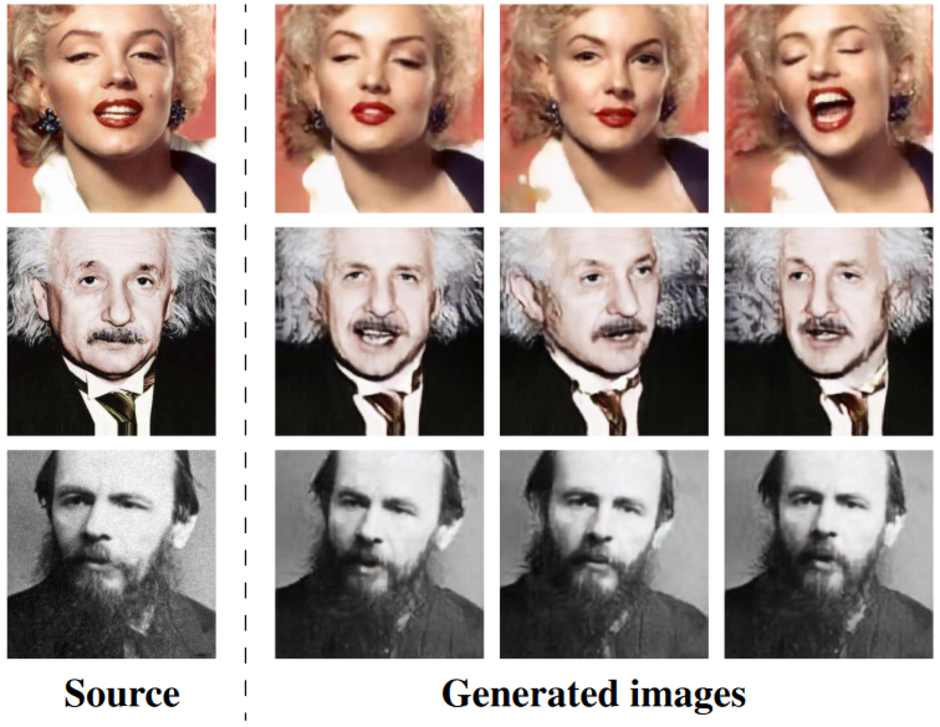

A "Deepfake" video is typically created from several photos of a subject. Using AI, these pictures are turned into a video of the subject doing something that he or she never did. But Samsung has taken this technology and made it even more dangerous; now, a Deepfake video can be created from just a single photograph. Samsung's AI lab in Russia developed the technology, which can take a picture of the Mona Lisa and turn it into a realistic video of her talking, moving her head and blinking her eyes.

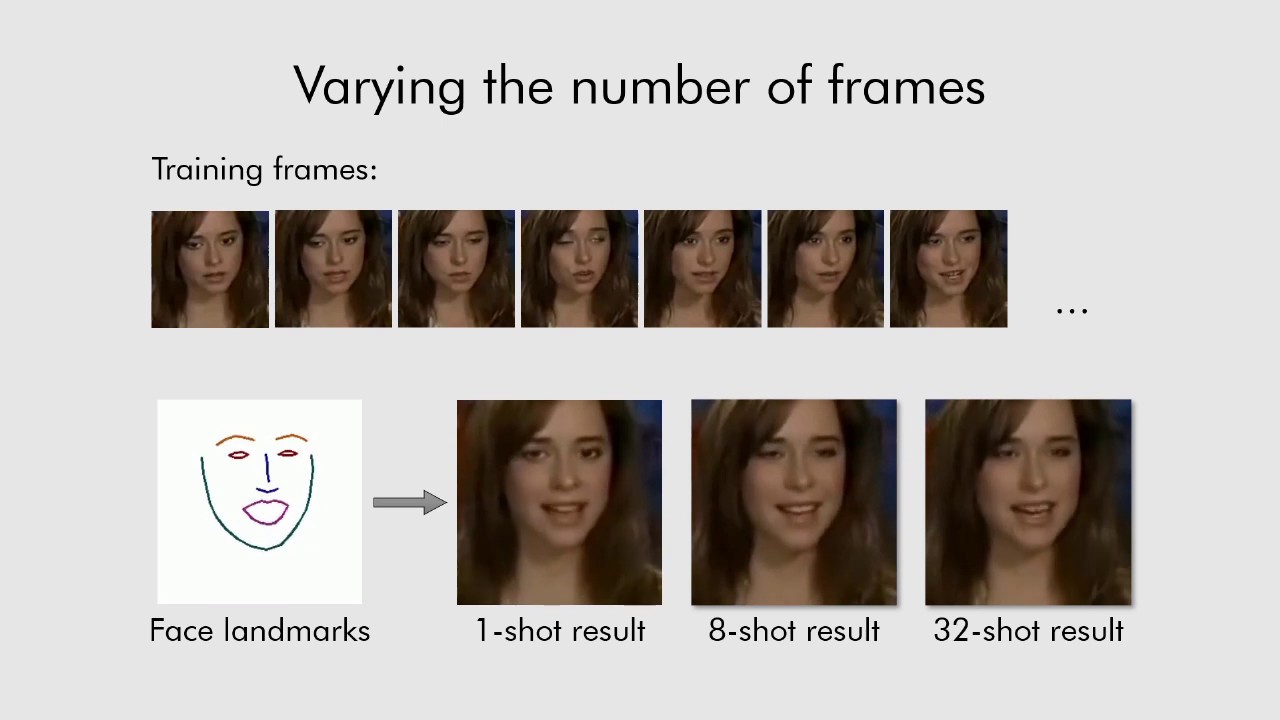

The system is first trained on a large number of videos to learn how a human face moves. It then applies what it has learned to a single photo or a small number of images to create the clip. While a subject's facial landmarks are used to help create realistic facial movements, there is a drawback: videos made from a small set of images don't provide the same detail as those created from a larger number of photographs. For example, a Deepfake video of Marilyn Monroe created in Samsung's lab missed the film icon's famous mole. A video created by the researchers includes an example showing that a Deepfake clip made from a single photo doesn't have the same detail seen in clips created using eight and 32 photos.

"Following the trend of the past year, this and related techniques require less and less data and are generating more and more sophisticated and compelling content. These results are another step in the evolution of techniques ... leading to the creation of multimedia content that will eventually be indistinguishable from the real thing." -Hany Farid, researcher, Dartmouth

Right now, there are glitches in this system that can make a Deepfake video created from a single photo look fake. But eventually, not only will these glitches be smoothed over, but the technology will undoubtedly end up in the hands of criminals looking to score some blackmail money, or to put politicians they don't like into compromising situations that never happened. And with the speed at which AI is advancing, these issues could pop up sooner than you might think.

Deepfake examples created by Samsung
