Is HDR+ on the Pixel the same as HDR+ on the Nexus? No, it's new and evolved!

The "old" HDR+

The HDR+ on the Nexus was touted for consistently producing sharp images with no blurred moving objects. Just like typical HDR, the phone would take a few stills when the user pressed the shutter button. It would then analyze them and pick the "sharpest" / "luckiest" shot of the bunch to use as the main reference point. The rest of the pictures were used as layers to help compress the scene's dynamic range. HDR+ would also actively look for stills where any moving objects were the least blurred, crop those objects out, and superimpose them over the main photo for a crisper result. While the typical HDR technique takes a few images at different exposures, HDR+ takes consistently underexposed stills in order to avoid blown-out highlights and grainy shadows.
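To make the "pick the sharpest shot" idea concrete, here is a minimal sketch in Python. The article does not say how the phone actually scores sharpness, so the metric below (sum of squared differences between neighboring pixels) is an assumption chosen for illustration, and the function names `sharpness` and `pick_sharpest` are hypothetical.

```python
def sharpness(frame):
    """Score a grayscale frame (list of rows) by its gradient energy.

    Assumed metric, not the camera's real one: crisp edges produce large
    jumps between neighboring pixels, blurred edges produce small ones.
    """
    total = 0.0
    rows, cols = len(frame), len(frame[0])
    for y in range(rows):
        for x in range(cols):
            if x + 1 < cols:  # horizontal neighbor
                total += (frame[y][x + 1] - frame[y][x]) ** 2
            if y + 1 < rows:  # vertical neighbor
                total += (frame[y + 1][x] - frame[y][x]) ** 2
    return total


def pick_sharpest(frames):
    """Return the index of the frame with the highest sharpness score."""
    return max(range(len(frames)), key=lambda i: sharpness(frames[i]))


# Three tiny 3x4 frames showing the same edge at different blur levels.
crisp = [[0, 0, 100, 100]] * 3
soft = [[0, 25, 75, 100]] * 3
blurry = [[0, 33, 66, 100]] * 3

burst = [soft, crisp, blurry]
print(pick_sharpest(burst))  # prints 1, the index of the crisp frame
```

The same edge spread over more pixels scores lower, which is why a frame captured during a moment of hand shake would lose out to a steadier one.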

Growing up

Conventional camera software (left) vs. Pixel HDR+ (right). Image courtesy of Marc Levoy

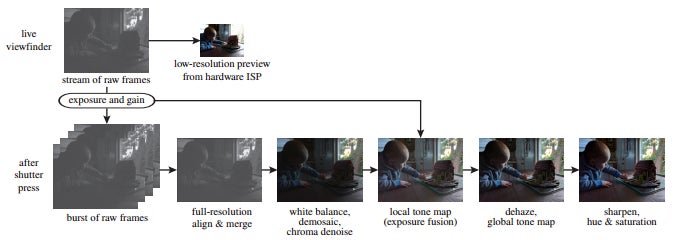

The Pixel's HDR+ still covers the basics of the Nexus version. When you press the shutter button, the Pixel takes 2 to 10 burst photos, depending on lighting conditions and dynamics within the frame: the more challenging a photo is, the more burst shots the phone needs to take. Unlike conventional HDR, which takes a few photos at different exposure settings before stitching them together, the Pixel's camera shoots stills at a constant exposure, which is purposefully set lower than usual. This helps in the later stages by minimizing highlight blowout and graininess in shadows. After this, the phone looks for the sharpest ("luckiest") shot from the burst to use as the "main" image for the photo, and the rest of the frames are aligned to it and used as layers to enhance it. In order to minimize the feeling of shutter lag, the Pixel will only pick a reference image from the first 3 shots in the burst.
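A rough sketch of two ideas from the paragraph above: restricting the reference pick to the first 3 frames, and why stacking several same-exposure frames helps with grain. This is a toy model under stated assumptions. Plain per-pixel averaging stands in for whatever merge the camera really performs, the frames are assumed to be already aligned, sensor noise is modeled as simple Gaussian noise, and the names `pick_reference`, `merge_burst`, and `simulate_frame` are hypothetical.

```python
import random
import statistics


def pick_reference(sharpness_scores, window=3):
    """Index of the sharpest frame among the first `window` frames only."""
    candidates = sharpness_scores[:window]
    return max(range(len(candidates)), key=lambda i: candidates[i])


def merge_burst(frames):
    """Average already-aligned frames pixel by pixel (assumed merge step)."""
    n = len(frames)
    return [sum(pixels) / n for pixels in zip(*frames)]


def simulate_frame(true_value, noise_sigma, num_pixels, rng):
    """A flat, underexposed patch with Gaussian noise (toy sensor model)."""
    return [rng.gauss(true_value, noise_sigma) for _ in range(num_pixels)]


rng = random.Random(0)  # fixed seed so the run is repeatable

# Eight frames of the same dark patch, all at the same exposure.
burst = [simulate_frame(20.0, 4.0, 2000, rng) for _ in range(8)]
merged = merge_burst(burst)

single_noise = statistics.pstdev(burst[0])
merged_noise = statistics.pstdev(merged)
print(f"single frame noise: {single_noise:.2f}")
print(f"merged noise:       {merged_noise:.2f}")

# Frame 3 has the best score, but only frames 0-2 are eligible.
print(pick_reference([0.4, 0.9, 0.6, 1.5, 0.2]))  # prints 1
```

In this model, averaging N frames cuts random noise by roughly the square root of N, so the merged shadows can be brightened afterwards with less visible grain. Because every frame shares one exposure, they can be stacked directly without first matching brightness levels.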


HDR+ Auto is more like a "lite mode"

There is also a small difference between shots taken in HDR+ Auto and HDR+ On. The latter mode is more "dedicated" to taking as many shots as it can before combining them. It suffers from a slightly longer shutter lag, but comes up with brighter, more balanced shots. HDR+ Auto is like a "lite" mode: it will only engage in dynamic scenarios, and it will be much faster to snap a photo. However, the resulting picture will be a bit less balanced than one taken with HDR+ On.

So, there you go. The HDR+ on the Pixel is much like the one on the Nexus before it, only more evolved... and super-powered.
