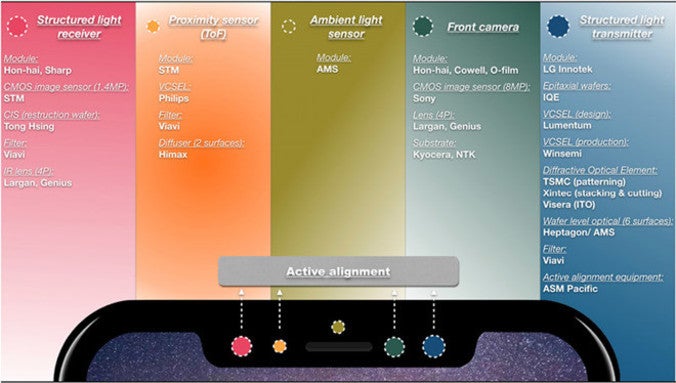

The sensors used for Face ID

Apple will introduce its 2017 iPhone models on September 12th, and a leak found in the Gold Master version of iOS 11 suggests that the new phones will be known as the Apple iPhone X (the tenth anniversary edition), the Apple iPhone 8 (sequel to the iPhone 7) and the Apple iPhone 8 Plus (sequel to the iPhone 7 Plus). Pre-orders could start on September 15th with all three models set for a September 22nd launch.

Earlier today, we showed you a video that revealed how Apple iPhone X users will set up Face ID so that the facial recognition system can unlock their phone or verify their identity.

The man who called Apple's facial recognition system (which involves a 3D sensing front-facing camera) "revolutionary" back in February is now weighing in on the technology behind the feature. KGI Securities' Ming-Chi Kuo, considered to be one of the best Apple analysts in the business, sent out a note to clients today showing them exactly how Face ID works.

Kuo says that Face ID relies on four components: a structured light transmitter, a structured light receiver, a front-facing camera, and a time of flight/proximity sensor. These sensors are found in the "Notch" at the top of the display. The structured light transmitter and receiver collect depth information, which is combined with the 2D images taken by the front-facing camera. Software algorithms then build a 3D image from that data, and that image is used for facial recognition. The proximity sensor can warn iPhone X users when they are holding the handset too far from, or too close to, their face to produce an accurate 3D image. To make sure that these sensors work perfectly out of the box, they all must be aligned before final assembly.

According to Kuo, all iPhone X models (expected in black, white and gold) will have a black coating on the front glass in order to hide the sensors that make up the four components. An ambient light sensor supports the True Tone display technology originally introduced in 2016, which uses ambient light readings to adjust the color temperature of the phone's screen. Kuo says that this will improve the performance of the 3D sensing feature.

Apple turned to facial recognition once it became obvious that the edge-to-edge screen on the iPhone X would prevent the use of a Touch ID button on the phone, and current technology won't allow Apple to embed a fingerprint scanner under the display. Kuo doesn't see either Apple or Samsung offering such a feature until the 2018 Samsung Galaxy Note 9 is released next year.

source: AppleInsider

Read the latest from Alan Friedman
