Latest test reveals the top virtual digital assistant; do you have it on your device?

Every so often, someone puts a set of questions to several virtual digital assistants to determine which one is the best. Among the four major AI-based helpers, Google Assistant usually comes out on top, with Apple's Siri, Amazon's Alexa and Microsoft's Cortana ranking behind the leader. This seems to be the case regardless of whether the testing is done on a smart speaker or a smartphone.

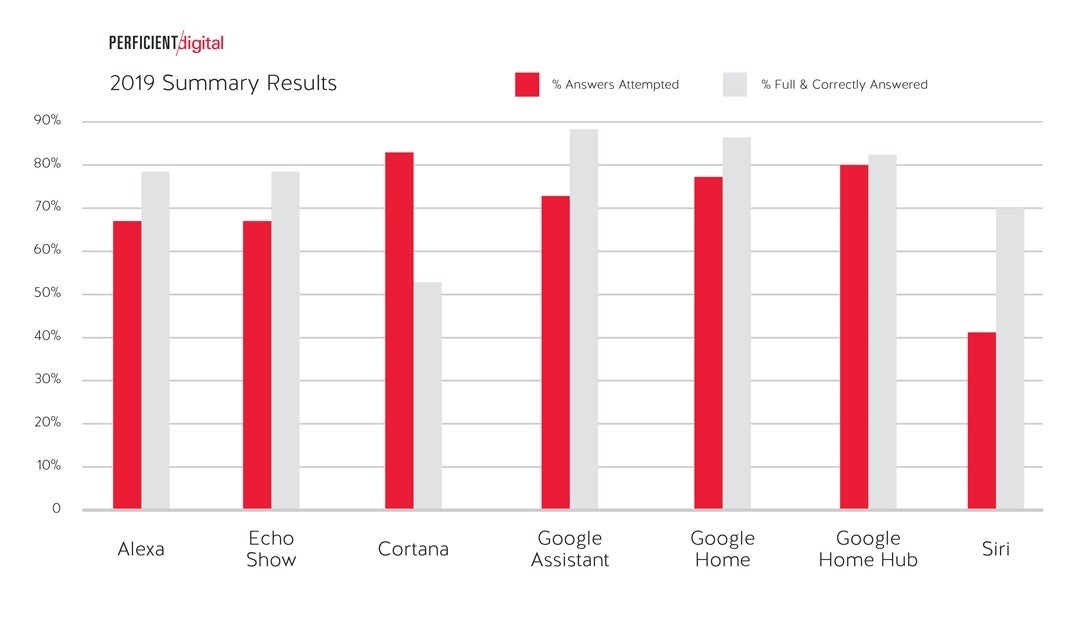

Last week, Perficient Digital conducted the most recent battle of the virtual assistants, putting 4,999 questions to Alexa, an Echo Show, Cortana, Google Assistant on a Google Home, Google Assistant on a Google Home Hub, Google Assistant on a smartphone and Siri. The scoring was based on a number of factors, including whether the response was given verbally, whether the answer came from a database or from a third-party source, whether the assistant failed to understand the question, and whether the answer was just flat-out wrong.

You're almost better off flipping a coin to get a correct response instead of relying on Cortana

The results? Google Assistant on a smartphone answered nearly 90% of the questions thrown at it "fully and correctly." Perficient explains that if it asked one of the virtual helpers "How old is Abraham Lincoln?" and was shown only his birthdate, that would not be a fully correct answer to the question. Google Assistant on the Google Home smart speaker was next with an 85% accuracy score and Assistant on a Home Hub finished third with a score of 82%. Siri followed with a 70% score with Alexa (67%) right behind. Cortana's score, slightly better than 50%, means that you're almost better off flipping a coin to get an answer.

Google Assistant is the top virtual digital helper in a new test

Interestingly, Cortana attempted to answer the most questions, which could partially explain its low accuracy score. Perficient defines this measure as the percentage of questions where "the personal assistant thinks it understands the question and makes an overt effort to provide a response. This does not include results where the response was 'I'm still learning' or 'Sorry, I don't know that' or responses where an answer was attempted, but the query was heard incorrectly."

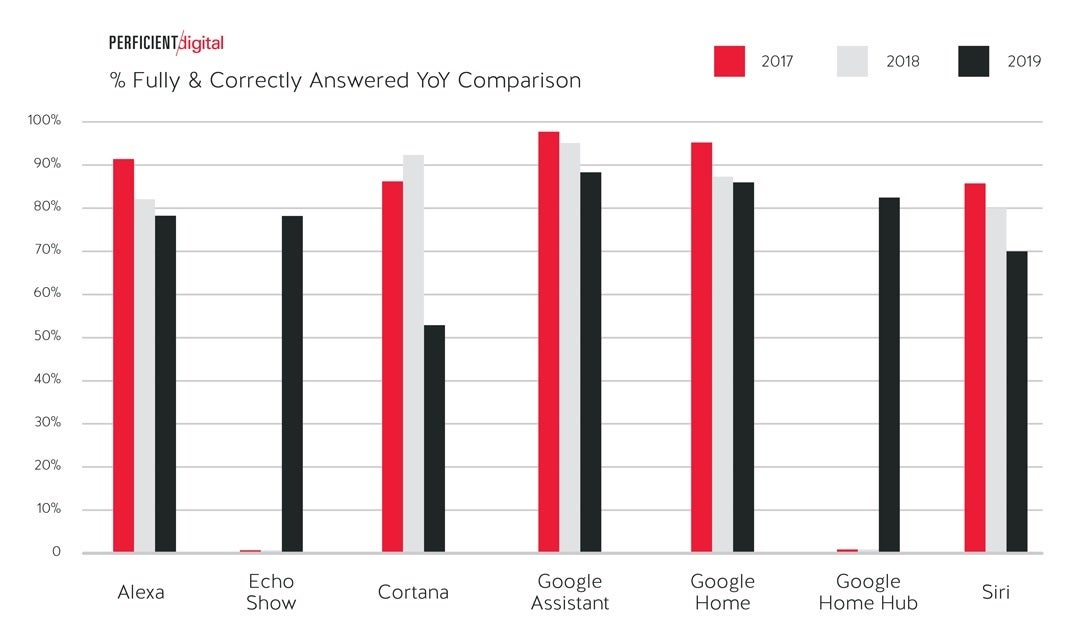

Google Assistant has answered the most questions fully over the last three years

This is the third year that Perficient has run a similar test, and looking at the results of the last three years, one might get the impression that digital assistants have been getting dumber. Outside of Cortana, which fully and correctly answered over 90% of the queries thrown at it last year, the digital helpers taking part in all three contests have seen their scores decline each year. What hasn't changed over the three years is the result at the top: the smartphone version of Google Assistant consistently beats out the other contenders.

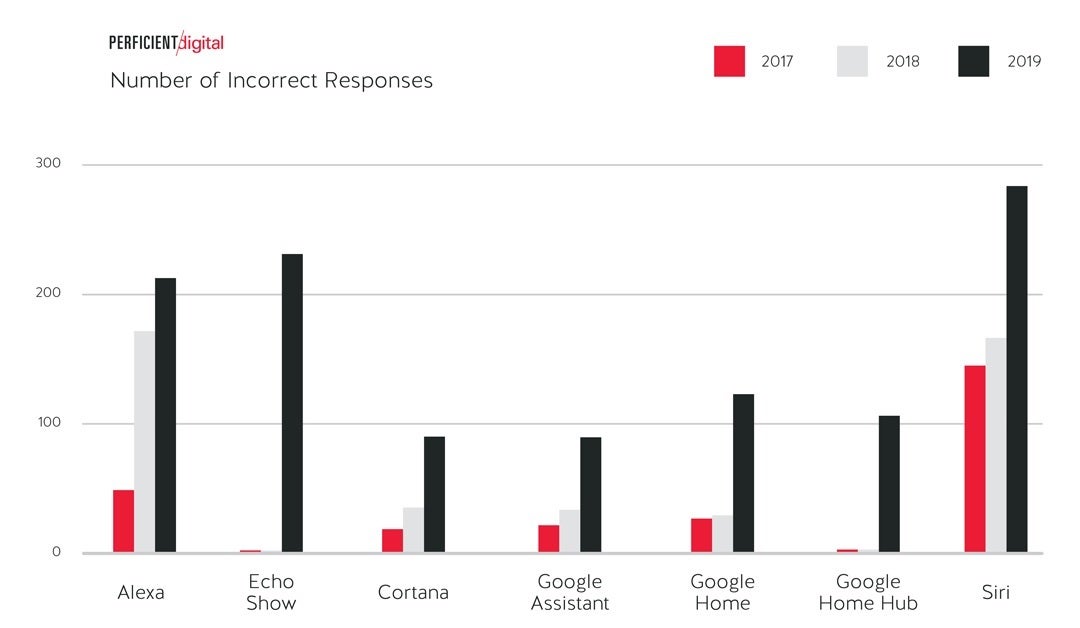

Siri answered the most questions incorrectly the last two years

During this year's testing, Siri had the most incorrect answers, amounting to nearly three times the number of incorrect responses from the smartphone version of Google Assistant. According to Perficient, about a third of the wrong answers from Siri and Alexa came from questions that the firm considered to be "poorly structured." An example would be "What movies do The Rushmore, New York appear in?" Additionally, most incorrect responses were so obviously wrong that a user would know not to believe the answer.

If you're curious about which of the personal assistants has the best sense of humor, the test shows that Siri and Alexa are neck and neck at the top. As an example, when Siri was asked "Where is Waldo?" Apple's digital helper responded, "He's well hidden. I can't see him." The most serious of the assistants was Cortana.

Lastly, Siri and Alexa used "snippets" the least. These are responses sourced from a third party and generally attributed to that source. Overall, the use of snippets among virtual assistants is trending lower, but Google Assistant (on every device) delivers such answers the most by far.

Incorrect and funny assistant responses
