Instagram introduces automatic blocking of offensive comments

The filter will work on both posts and live video, and will block comments it deems abusive or offensive. A new spam filter is being introduced alongside it. Both are powered by machine learning, which helps explain why the company didn't elaborate on what exactly it considers offensive.

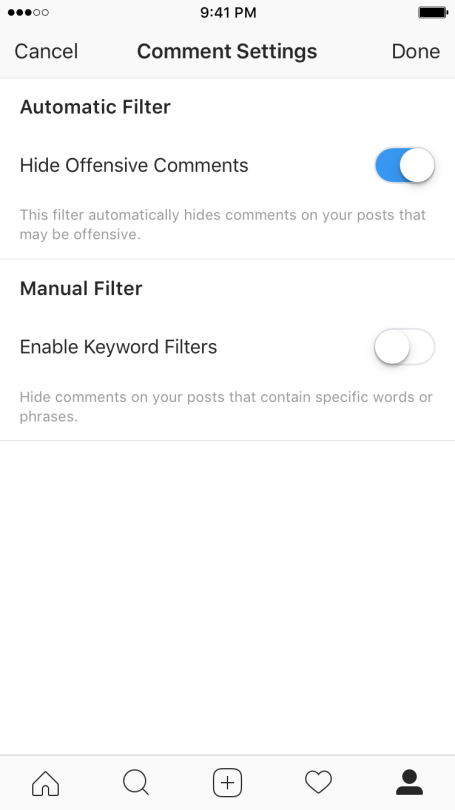

While the offensive comment filter will initially be available only in English, the spam filter will also work in eight additional languages: Spanish, Portuguese, Arabic, French, German, Russian, Japanese and Chinese. Since Instagram specifically mentions that the algorithms will "improve over time," expect them to be a little rough around the edges at first. If you'd rather not trust a computer's judgement on what is and isn't offensive, the feature can be disabled in the app's settings.

source: Instagram
