Google Assistant and Alexa have trouble understanding certain accents

The Washington Post recently tested the listening acumen of the top two virtual personal assistants currently available: Google Assistant and Alexa. The pair are widely considered the best digital helpers around today. But even Google Assistant and Alexa may not always understand the commands being barked at them by users. To see how this plays out in real life, The Washington Post conducted a test using Google Home and Amazon Echo smart speakers.

The test was carried out by Globalme, a language and tech company. Seventy commands (such as "Start playing Queen," "Add new appointment," and "How close am I to the nearest Walmart?") were spoken to the two personal assistants. The AI employed by the assistants is "taught" to understand accents by listening to many different voices, from which it figures out patterns and connections between words and phrases.

"The more we hear voices that follow certain speech patterns or have certain accents, the easier we find it to understand them. For Alexa, this is no different. As more people speak to Alexa, and with various accents, Alexa’s understanding will improve." - Amazon

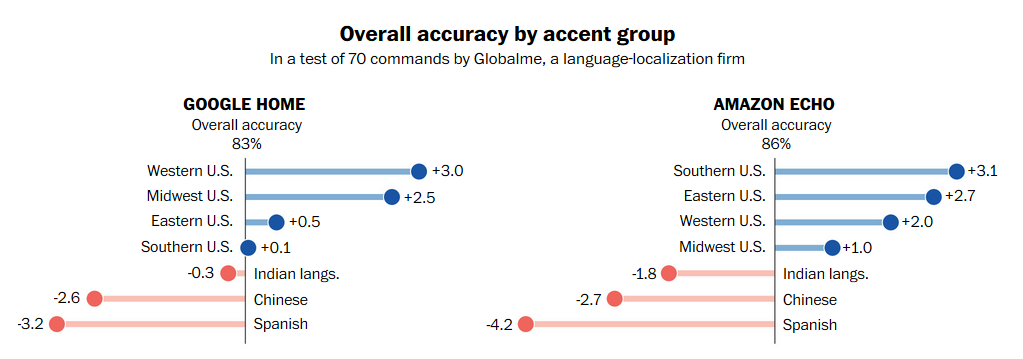

Google Assistant had an overall accuracy rate of 83%, while Alexa was a little better at 86%. Alexa also did a little better with U.S. accents (+1% to +3.1%) than Google Assistant (+0.1% to +3%), but it did a little worse with those whose first language is an Indian language, Chinese or Spanish. Asking the assistants to control streaming entertainment on the Google Home or Amazon Echo also resulted in a huge gap based on accents. Google Home did best with users from the Eastern part of the U.S. (91.8% accuracy), and worst with those who speak Spanish as their first language (79.9%). Alexa performed best with those from the Southern U.S. (91%), and worst with those whose first language is Chinese (81%).
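To put a number on that gap, the best and worst figures quoted above can be subtracted directly. The short Python sketch below does only that; the dictionary layout and the `accuracy_spread` helper are illustrative names of our own, not anything from the Washington Post or Globalme, and the inputs are just the four percentages cited in this article.

```python
# Best and worst accuracy figures (percent) as quoted in the article.
# The structure and names here are illustrative assumptions, not test data.
quoted_accuracy = {
    "Google Home": {"Eastern U.S.": 91.8, "Spanish (first language)": 79.9},
    "Amazon Echo (Alexa)": {"Southern U.S.": 91.0, "Chinese (first language)": 81.0},
}


def accuracy_spread(groups):
    """Return (best group, worst group, gap in percentage points)."""
    best = max(groups, key=groups.get)
    worst = min(groups, key=groups.get)
    return best, worst, round(groups[best] - groups[worst], 1)


for device, groups in quoted_accuracy.items():
    best, worst, gap = accuracy_spread(groups)
    print(f"{device}: {best} vs. {worst} = {gap} points")
```

On these quoted figures, the spread works out to 11.9 percentage points for Google Home and 10.0 for the Amazon Echo.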

How well Google Assistant and Alexa understand certain accents

source: The Washington Post
