Facebook will tell you exactly why some COVID-related posts you've interacted with got removed

Social media giant Facebook has updated a news article it originally published on April 16, aptly titled "An Update on Our Work to Keep People Informed and Limit Misinformation About COVID-19".

As the name suggests, the article revolves around Facebook's work with health experts to keep "harmful misinformation about COVID-19 from spreading" on its apps, which include Facebook Messenger, WhatsApp and Instagram.

Most visibly, this has involved showing Facebook users messages under COVID-related posts that flag whether the content is authentic and link to a credible source of information about the pandemic.

As of yesterday, December 15, Facebook has decided to redesign such messages "as more personalized notifications to more clearly connect people with credible and accurate information about COVID-19".


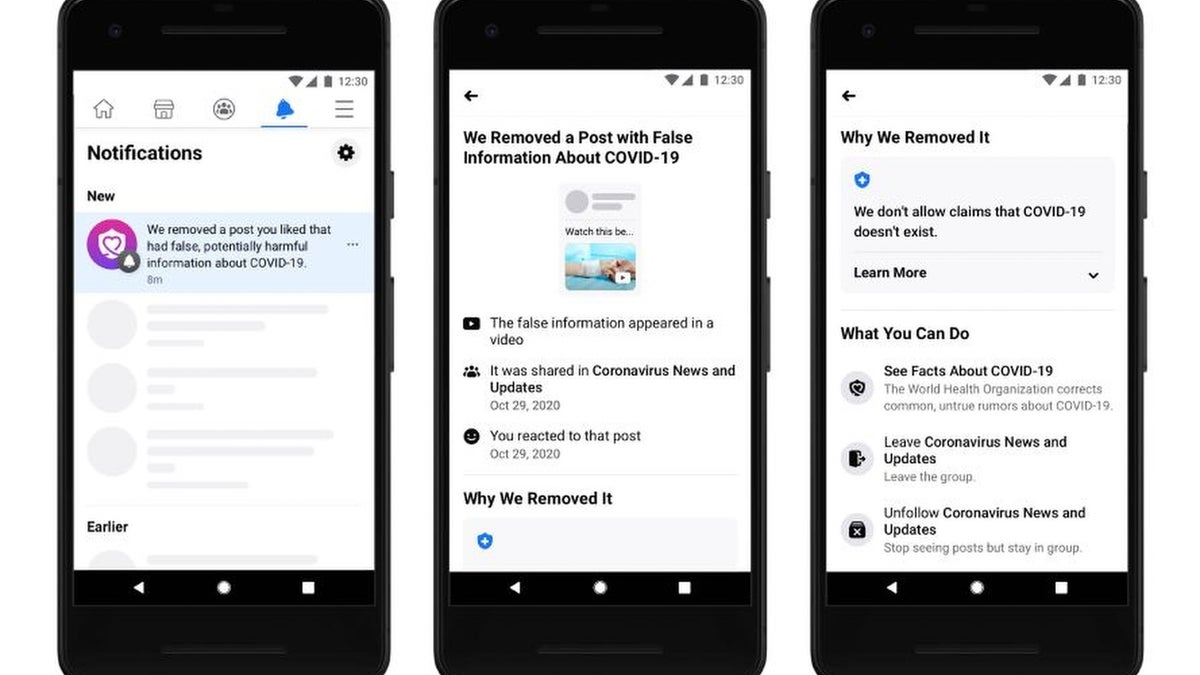



According to Facebook, now its users will:

- Receive a notification that says we’ve removed a post they’ve interacted with for violating our policy against misinformation about COVID-19 that leads to imminent physical harm.

- Once they click on the notification, they will see a thumbnail of the post, and more information about where they saw it and how they engaged with it.

- They will also see why it was false and why we removed it (e.g. the post included the false claim that COVID-19 doesn’t exist).

- People will then be able to see more facts about COVID-19 in our Coronavirus Information Center, and take other actions such as unfollowing the Page or Groups that shared this content.

For better transparency, Facebook users can now expect notifications about removed COVID-related posts that they've liked or otherwise interacted with. Facebook will also explain exactly why a post was removed (for example, "We don't allow claims that COVID-19 doesn't exist").
