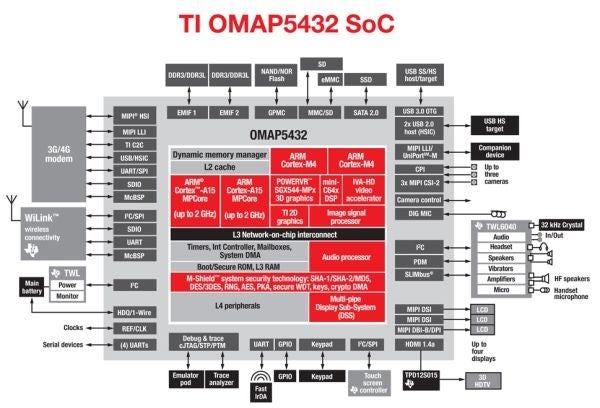

TI shows off its dual-core Cortex A15-based OMAP 5 beating a quad-core Cortex A9 device

Even kids know that quad-core is better and faster than dual-core, but there's one exception: when the two chips are built on different architectures. TI's OMAP 5 proves exactly that. The company used its dual-core Cortex-A15 based chip to dispel the illusion that quad-core is the alpha and omega of upcoming devices.

Here's how: the TI OMAP 5 chip featured two Cortex-A15 cores clocked at 800MHz, along with some tweaks and accelerations, and was pitted against a commercial device powered by a quad-core Cortex-A9 based design with each core running at 1.3GHz. In TI's demo video, the OMAP chip knocked the socks off the quad-core chip in a web page loading contest. Both devices were programmed to render web pages while downloading videos and playing MP3 files at the same time.

The end result for TI OMAP was 95 seconds for 20 web pages, while the quad-core chip took more than twice as long: 201 seconds. TI doesn't specify which quad-core device it used, but the only ones we know of are Tegra 3 devices like the Asus Transformer Prime (though the Prime's cores are clocked at 1.4GHz), so our guess is that TI is comparing its OMAP to the Tegra 3.
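
For a rough sense of what those totals mean per page, here is a quick back-of-the-envelope sketch using only the figures quoted above. The variable and function names are ours, not TI's, and the calculation assumes every page took about the same time to load, which the demo does not confirm.

```python
# Figures quoted in the article for the 20-page web-loading test.
PAGES = 20
OMAP5_SECONDS = 95      # dual-core Cortex-A15 at 800MHz
QUAD_A9_SECONDS = 201   # quad-core Cortex-A9 at 1.3GHz


def per_page(total_seconds: float, pages: int) -> float:
    """Average load time per page, assuming pages are roughly equal."""
    return total_seconds / pages


omap5_per_page = per_page(OMAP5_SECONDS, PAGES)
quad_per_page = per_page(QUAD_A9_SECONDS, PAGES)

# How many times longer the quad-core device took overall.
speedup = QUAD_A9_SECONDS / OMAP5_SECONDS

print(f"OMAP 5:      {omap5_per_page:.2f} s/page")
print(f"Quad-core:   {quad_per_page:.2f} s/page")
print(f"OMAP 5 lead: {speedup:.2f}x")
```

That works out to roughly 4.75 seconds per page for the OMAP 5 against about 10 seconds for the quad-core device, or a lead of a bit over 2.1x.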

What do you think about the comparison - is it valid or could network congestion have flawed it? Are you excited about Cortex A15 designs? Sound off below.

source: AnandTech
