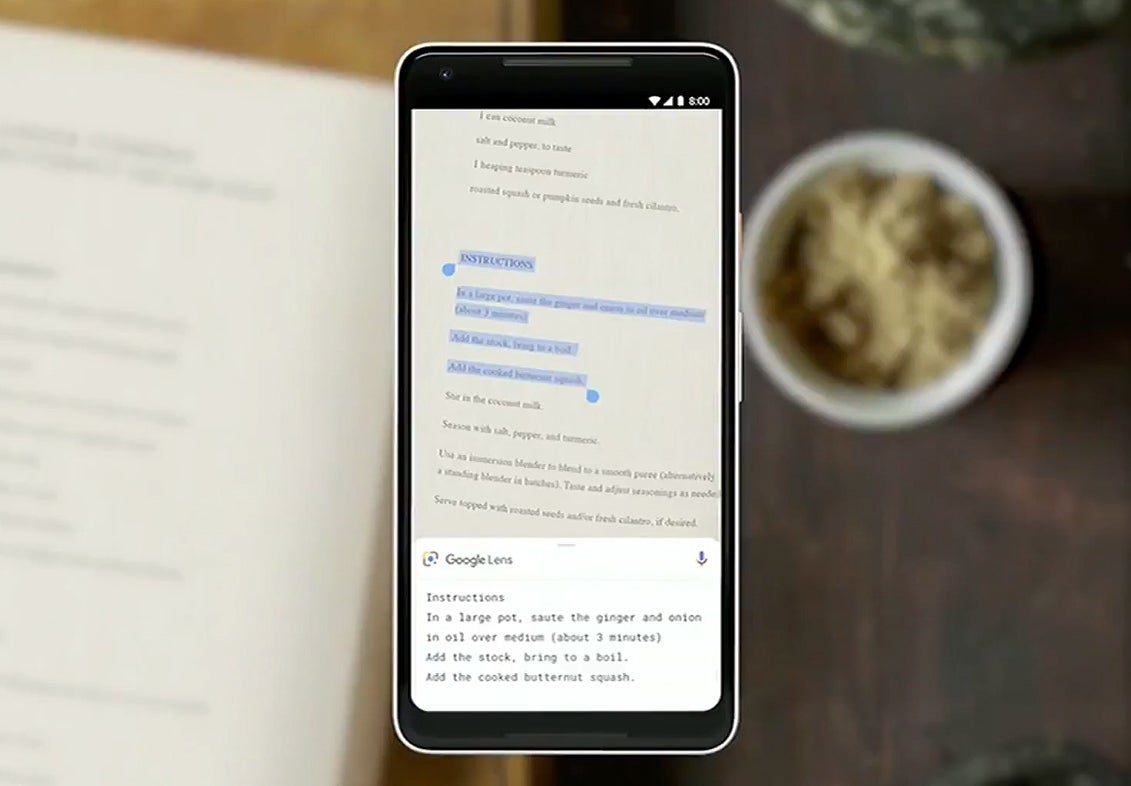

Google Lens will be integrated in the cameras of future Android phones

One of the exciting features demoed at Google I/O 2018 showcased Google Lens's ability to recognize text in images. Much like Google Translate, Lens uses OCR (optical character recognition) to let users scan words from an image and paste them on their device as editable text.

"Style Match" was another upcoming feature shown off at I/O 2018. It lets Lens scan images of clothes and find other clothing and accessories that would go well with the source material.

Google's ultimate vision for Lens, however, goes far beyond these examples. The idea is that, eventually, Google Lens will be "like a visual browser for the world around you," says Aparna Chennapragada, vice president of product for AR, VR, and vision-based products at Google. In practice, this means simply opening your camera app (which will already have Lens built in) and pointing it at something. The goal is for Lens to automatically scan your surroundings and surface relevant information about what it sees.

Expect these features and more to roll out to Google Lens in the coming months.
